The future lies in personal learning networks and paths, learning that blends experiential and digital approaches, and free and open-source educational models.

—Anya Kamenetz

DIY U: Edupunks, Edupreneurs, and the Coming Transformation of Higher Education

Over the past six weeks, I have been participating in the OERu’s Digital Literacies for Online Learning course (LiDA 101). I recently completed the final Learning Pathway, “Learning in a Digital Age.” Upon completing this module, I was able to use SimpleNote, a digital, open-access note-taking tool; summarize an academic publication to support my research; identify a range of academic and study skills in a mind-mapping exercise; and confidently discuss the future of higher education in a digital age, with particular emphasis on the implications for academic and study skills.

Due to my other teaching commitments, administrative duties, and research responsibilities, I was unable to complete this module as quickly as I had hoped. This meant that each time I resumed, I had to review the previous material before I could move on. Fortunately, this allowed me to spend a bit more time thinking about the material presented and connecting some of the ideas to the current state of higher education, as affected by the global pandemic and the sudden pivot to remote delivery and online teaching.

This brings me to the realization of how closely many of the ideas I have been learning about in this course are linked to some of my other research interests. In her TEDx Talk “DIY U,” Anya Kamenetz explained the 2010 crisis facing post-secondary institutions and the disruptions they faced. I teach at a community college, so many of her comments on the need for open education to support contract academic faculty and make resources more affordable for students deeply resonated with me. We have come a long way toward realizing some of Kamenetz’s visions (especially in BC, where open education has been embraced); however, nearly 10 years later, we have not moved far beyond the structural challenges Kamenetz outlined. Fortunately, the unique circumstances of 2020 may be forcing an unprecedented disruption to how we teach and learn, one that will provide the opportunity to more fully realize the open, tech-enabled communities of practice she advocated for in her presentation.

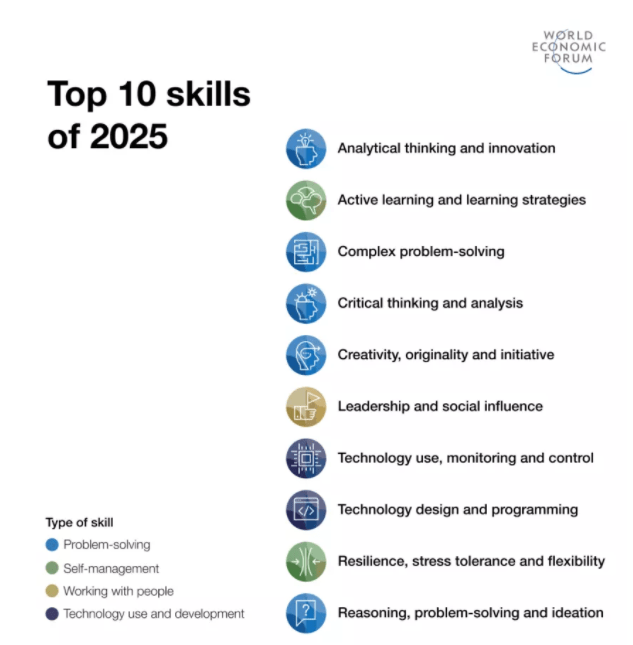

When researching academic skills for learning success, I remembered a LinkedIn post one of my colleagues recently shared (figure 1). According to the World Economic Forum’s “Top 10 skills of 2025,” the jobs of tomorrow will require problem solving, self-management, working with people, and technology use and development skills. Seeing these competencies made me think about how important it is for us to capitalize on the opportunities presented by the pivot to online learning. It left me wondering how these skills could be further cultivated through a radical rethinking of our core beliefs about teaching and learning, and about the need for drastic systemic change in post-secondary education.

I am grateful for the tech skills and research methods I have learned in LiDA 101. Stay tuned for my next research project!